Technologies Which Made Crypto Possible

How cryptography, P2P networks, consensus, and smart contracts made cryptocurrency possible — and how TRON extends that foundation.

Cryptocurrencies are among the most revolutionary technological breakthroughs of our time, but they sure as hell didn’t come from thin air. Just as the airplane wouldn’t have been possible without first discovering and harnessing fire, and the internet wouldn’t have been possible without decades of network research and development, the dream of decentralized digital money could only come to fruition by knitting together a slew of foundational technologies developed over many years.

This post traces that lineage from the 1970s to today, explaining how each development brought us one step closer to a complete blockchain solution. We will look at the technologies behind cryptocurrencies, from the cryptographic primitives that secure transactions to the P2P networks that sustain decentralized trust, and then at the consensus algorithms and smart contracts built on top of them.

Building Blocks: Public Keys and Digital Signatures

The journey towards decentralized money started with one question: how can you conduct a secure trade with somebody over an insecure channel, without having to trust anybody else? The answer was cryptography, and specifically the creation of public-key cryptography in the 1970s.

In 1976, Whitfield Diffie and Martin Hellman changed the game when they published their work on asymmetric cryptography. Earlier symmetric ciphers required both parties to share a secret key in advance. The Diffie-Hellman protocol instead let two parties derive a shared secret key over an insecure channel without ever sending the key itself, a solution to the key distribution problem that had plagued cryptologists for hundreds of years.

Building on this, Ronald Rivest, Adi Shamir and Leonard Adleman designed the RSA algorithm in 1977; the name comes from the initials of their family names. RSA was the first practical public-key cryptography scheme, relying on the difficulty of factoring the product of two large primes as its mathematically hard problem. The algorithm generates a pair of keys: a public key that you can share with anyone, and a private key that you keep to yourself. Anyone can use your public key to encrypt a message to you, but only your private key can decrypt it. This asymmetry eliminated the need for secure key exchange and enabled secure communication between any two parties without prior arrangement.

The effects of public-key cryptography reached far beyond encryption. It laid the foundation for digital signatures, a technique to prove that an electronic message is authentic and unaltered. Together with cryptographic hash functions, digital signatures secure transactions throughout blockchain systems and let users prove ownership without revealing their private keys. This is the technology that secures cryptocurrencies, ensuring that only the holder of a wallet's private key can spend its value.

Digital signatures were later formalized in standards such as the U.S. Digital Signature Standard in the early 1990s, which specify a common way of building and verifying signatures irrespective of the system. In blockchain systems, digital signatures authorize every transaction, though they are often overlooked in discussions about blockchain technology. Preventing double-spending additionally requires the consensus mechanisms covered later in this post.
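The key-agreement idea can be illustrated with a toy sketch. The modulus and base below are assumptions chosen for readability (a 32-bit prime is far too small for real use), and the function names are hypothetical; real systems use standardized groups or elliptic curves through a vetted library.

```python
import secrets

# Toy public parameters (illustrative assumptions, NOT secure sizes).
P = 4294967291  # 2**32 - 5, a small prime modulus
G = 5           # public base

def dh_keypair():
    """Return (private, public) where public = G**private mod P."""
    private = secrets.randbelow(P - 3) + 2  # random value in 2 .. P-2
    return private, pow(G, private, P)

def dh_shared(private, other_public):
    """Combine our private value with the peer's public value."""
    return pow(other_public, private, P)

alice_priv, alice_pub = dh_keypair()
bob_priv, bob_pub = dh_keypair()

# Only alice_pub and bob_pub travel over the insecure channel.
alice_secret = dh_shared(alice_priv, bob_pub)
bob_secret = dh_shared(bob_priv, alice_pub)
print(alice_secret == bob_secret)  # True
```

Both sides arrive at the same value because (G^a)^b and (G^b)^a are equal modulo P, while an eavesdropper only ever sees the two public values.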
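To make the public/private asymmetry concrete, here is a textbook RSA sketch with deliberately tiny primes. The specific numbers are an illustrative assumption, and the sketch omits the padding that real RSA requires, so it should be read as a demonstration of the arithmetic only.

```python
# Textbook RSA with tiny primes; for illustration only (no padding, not secure).
p, q = 61, 53
n = p * q                  # public modulus: 3233
phi = (p - 1) * (q - 1)    # 3120
e = 17                     # public exponent, coprime with phi
d = pow(e, -1, phi)        # private exponent (modular inverse, Python 3.8+)

def encrypt(message: int) -> int:
    """Anyone holding the public key (e, n) can encrypt."""
    return pow(message, e, n)

def decrypt(ciphertext: int) -> int:
    """Only the holder of the private exponent d can decrypt."""
    return pow(ciphertext, d, n)

ciphertext = encrypt(65)
print(ciphertext)           # 2790
print(decrypt(ciphertext))  # 65
```

Recovering `d` from the public values requires knowing `phi`, which in turn requires factoring `n`. That is trivial for 3233 and believed infeasible for moduli of 2048 bits or more.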
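A minimal hash-then-sign sketch shows how a signature ties a message to a private key. It reuses a toy RSA key (n = 3233, e = 17, d = 2753) as an assumption for readability; blockchains typically use elliptic-curve signatures instead, and with a modulus this small the scheme offers no real security.

```python
import hashlib

# Toy RSA key, assumed for illustration only.
N, E, D = 3233, 17, 2753

def digest_int(message: bytes) -> int:
    """Hash the message and reduce it into the toy key's range."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N

def sign(message: bytes) -> int:
    """Signing uses the private exponent D."""
    return pow(digest_int(message), D, N)

def verify(message: bytes, signature: int) -> bool:
    """Verification needs only the public values (E, N)."""
    return pow(signature, E, N) == digest_int(message)

tx = b"pay 5 coins from wallet A to wallet B"
sig = sign(tx)
print(verify(tx, sig))            # True
print(verify(tx, (sig + 1) % N))  # False: a forged signature fails
```

The private key never leaves the signer: verifiers learn that the holder of `D` approved this exact message, and nothing else.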

Hash Functions and Data Integrity: Platform to Immutability

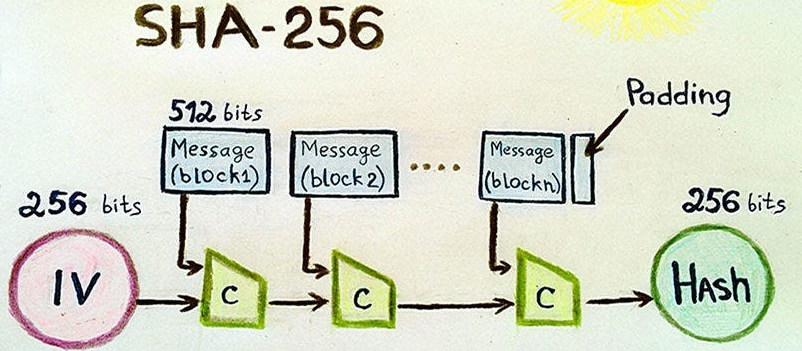

The authentication issue can be addressed with public-key cryptography, but other methods are needed to preserve data integrity. That’s where cryptographic hash functions come in: they provide a mathematical way to produce digital fingerprints of data. You can give these functions an input of any size and they will produce an output of a fixed size, 256 bits in the case of SHA-256. While the output appears random, it is deterministic: the same input always yields the same output.

Standardized secure hash algorithms date to the 1990s. The Secure Hash Algorithm (SHA) is a family of cryptographic hash functions designed by the NSA, with the first version published as a U.S. federal standard in 1993. After SHA-1 began showing weaknesses, systems transitioned to SHA-256, which became a de facto standard for cryptographic hashing. SHA-256 is well suited to blockchains: it is fast to compute, it is collision resistant (two different inputs are extremely unlikely to produce the same output), and it is designed to be one-way, meaning that recovering the original input from the hash alone is computationally infeasible.

Hash functions are used in several important ways in blockchain systems. First, each block contains the hash of the previous block, which chains the blocks together so that history cannot be changed unnoticed. If we change the contents of just one block, its hash changes, and the hashes of all subsequent blocks must be recalculated. In a proof-of-work chain this makes tampering economically implausible: the attacker would need to control more than half of the network’s overall computing power to rewrite the ledger. Second, hash functions make Merkle trees possible.

This tree structure allows verifying a single transaction inside a block without downloading the entire blockchain. Last but not least, the unpredictability of hash outputs is used in proof-of-work consensus protocols. Miners must find hash values that satisfy a pre-specified property, such as falling below a target. This is costly in terms of computation, but it is fair and decentralized: the producer of the next block is effectively chosen at random, in proportion to computing power, so nobody can predict who will be chosen and no single party controls the network.
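These properties are easy to observe with Python's standard `hashlib` module. The helper name `sha256_hex` and the sample messages are assumptions for illustration.

```python
import hashlib

def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as 64 hex characters (256 bits)."""
    return hashlib.sha256(data).hexdigest()

first = sha256_hex(b"send 5 coins to Alice")
again = sha256_hex(b"send 5 coins to Alice")
changed = sha256_hex(b"send 6 coins to Alice")

print(len(first) * 4)    # 256: the output size is fixed
print(first == again)    # True: same input, same fingerprint
print(first == changed)  # False: a one-character change alters the digest
```

The input can be a few bytes or several gigabytes; the fingerprint is always the same length.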
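The chaining of blocks by hash can be sketched in a few lines. The block layout here (a dict with `index`, `prev_hash` and `transactions`) is a simplifying assumption and does not match any real chain's format.

```python
import hashlib
import json

def block_hash(block: dict) -> str:
    """Hash a block's full contents, including its link to the previous block."""
    payload = json.dumps(block, sort_keys=True).encode()
    return hashlib.sha256(payload).hexdigest()

def build_chain(batches):
    """Build a list of blocks, each storing the hash of its predecessor."""
    chain, prev = [], "0" * 64  # placeholder hash for the first block
    for index, transactions in enumerate(batches):
        block = {"index": index, "prev_hash": prev, "transactions": transactions}
        chain.append(block)
        prev = block_hash(block)
    return chain

def first_broken_link(chain):
    """Return the index of the first block whose prev_hash no longer matches."""
    for i in range(1, len(chain)):
        if chain[i]["prev_hash"] != block_hash(chain[i - 1]):
            return i
    return None

chain = build_chain([["a->b: 5"], ["b->c: 2"], ["c->a: 1"]])
print(first_broken_link(chain))            # None: chain is intact
chain[0]["transactions"] = ["a->b: 500"]   # tamper with history
print(first_broken_link(chain))            # 1: the next block exposes the edit
```

To hide the edit, the attacker would have to recompute the stored hash in block 1, which changes block 1's own hash and breaks block 2, and so on to the tip of the chain.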
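As a rough sketch of how a Merkle tree supports that kind of lightweight verification, the code below builds a root and an inclusion proof for one transaction. It assumes single SHA-256 and duplication of the last node on odd levels; real chains differ in these details (Bitcoin, for example, hashes twice), and the function names are hypothetical.

```python
import hashlib

def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def merkle_root_and_proof(leaves, index):
    """Return (root, proof) for leaves[index]; leaves must be non-empty.

    The proof is a list of (sibling_hash, sibling_is_left) pairs.
    """
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd levels
        sibling = index ^ 1          # neighbour within the same pair
        proof.append((level[sibling], sibling < index))
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof

def verify_proof(leaf, proof, root):
    """Recompute the path from the leaf to the root using only the proof."""
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root

txs = [f"tx{i}".encode() for i in range(5)]
root, proof = merkle_root_and_proof(txs, 3)
print(verify_proof(b"tx3", proof, root))   # True
print(verify_proof(b"tx9", proof, root))   # False
print(len(proof))                          # 3 hashes for 5 transactions
```

The proof grows with the logarithm of the number of transactions, which is why a light client can check inclusion with a handful of hashes instead of the full block.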

Peer-to-peer (P2P) systems demonstrated that users could exchange data directly with one another. Napster (1999), founded by Shawn Fanning and Sean Parker, showed that music files could be transferred directly between users. While Napster was ultimately shut down by the courts, it proved that a network built on direct transfers could gain massive user traction and still work. The later mainstream adoption of P2P systems like BitTorrent reinforced the trend toward decentralized architecture as a practical solution for large-scale applications.

From a software engineering perspective, P2P networks established principles that became blueprints for how blockchain technology operates. First, they demonstrated that consistency could be maintained even without central coordination. Second, they established that networks can be resilient to node failure, as the loss of a few participants does not lead directly to the collapse of the network. Third, they showed that distributed systems can scale horizontally, gaining throughput as nodes are added to the network.

Blockchain networks are P2P in nature, which brings several benefits. Every full node stores a complete copy of the blockchain, so the data is redundant and there is no single point of failure. New nodes can join by copying the blockchain from any live node, and the system repairs itself automatically when network partitions heal. The distributed architecture also sets a high bar for censorship, because there is no single central entity that can be forced under duress, whether by armed soldiers, swarms of lawyers, or governments exercising monopolistic authority, to shut parties out or prohibit transactions.

Decentralization raises an important problem, however: the parties in a distributed setting aren't inherently trusted, yet they must reach common agreement on the transactions.

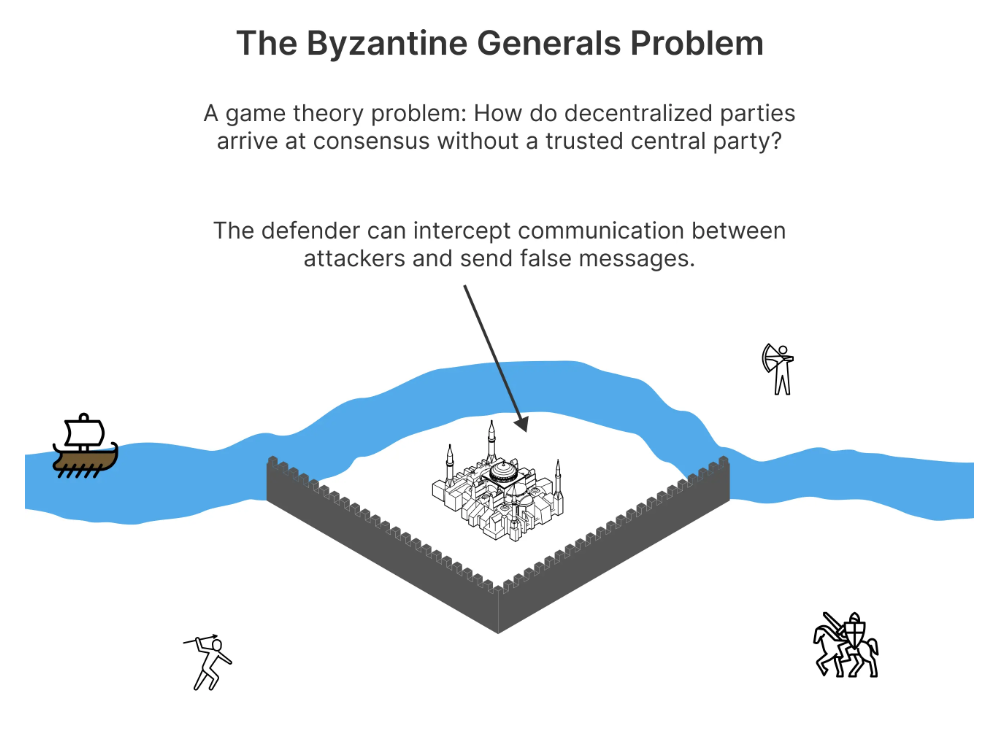

This is known as the Byzantine Generals Problem, first defined in 1982 by Leslie Lamport, Robert Shostak and Marshall Pease. In it, a group of generals want to attack a city and need to communicate with one another about when and how to do so, but some of them may be traitors who send conflicting messages to disrupt consensus.

The Byzantine Generals Problem captures a fundamental challenge: how can distributed systems agree on a decision when some of the participating nodes might be either faulty or malicious? The problem arises concretely in blockchain networks, where there is no central party to settle disputes about the validity and ordering of transactions.

Early consensus algorithms include Practical Byzantine Fault Tolerance (PBFT), introduced by Miguel Castro and Barbara Liskov in 1999. With PBFT, a distributed system can still function correctly as long as fewer than one-third of the nodes are faulty or compromised. But every node must exchange messages with every other node, so it does not scale well to large networks.

Bitcoin took a different approach, using proof-of-work (PoW) for consensus. The idea was proposed in 1993 by Cynthia Dwork and Moni Naor as an answer to email spam: a participant must show evidence of having performed a computation-intensive task before being served. In Bitcoin, “miners” compete to solve a cryptographic puzzle; the winner adds the next “block” of transactions to the chain and receives newly minted bitcoins in return.

PoW addressed the consensus problem by imposing a cost on anyone trying to attack the network. Tampering with the blockchain would require an attacker to control more than half of all computing power on the network, a proposition that would be astronomically expensive. Even a successful attacker would be shooting themselves in the foot: undermining the network would destroy the value of the very asset they were trying to seize.
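The one-third bound and the scaling problem can both be shown with simple arithmetic. The sketch below uses the standard PBFT sizing rule n ≥ 3f + 1; the all-to-all message count is a simplified assumption that counts one message per ordered pair of nodes in a single phase, and the function names are hypothetical.

```python
def max_faulty(n: int) -> int:
    """Largest number of Byzantine nodes f that n nodes can tolerate (n >= 3f + 1)."""
    return (n - 1) // 3

def all_to_all_messages(n: int) -> int:
    """Messages in one all-to-all phase: every node sends to every other node."""
    return n * (n - 1)

for n in (4, 10, 100, 1000):
    print(n, max_faulty(n), all_to_all_messages(n))
# 4 1 12
# 10 3 90
# 100 33 9900
# 1000 333 999000
```

Fault tolerance grows linearly with the number of nodes while message traffic grows quadratically, which is why this style of protocol suits small validator sets better than open networks with thousands of participants.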
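A toy miner makes the puzzle concrete. This sketch assumes a simplified rule (the SHA-256 of header plus nonce must fall below a target with `difficulty_bits` leading zero bits); Bitcoin's real header format and difficulty encoding are more involved.

```python
import hashlib

def pow_digest(header: bytes, nonce: int) -> bytes:
    return hashlib.sha256(header + nonce.to_bytes(8, "big")).digest()

def mine(header: bytes, difficulty_bits: int) -> int:
    """Search for a nonce whose digest has `difficulty_bits` leading zero bits."""
    target = 1 << (256 - difficulty_bits)
    nonce = 0
    while int.from_bytes(pow_digest(header, nonce), "big") >= target:
        nonce += 1
    return nonce

def check(header: bytes, nonce: int, difficulty_bits: int) -> bool:
    """Verification costs a single hash, however long mining took."""
    target = 1 << (256 - difficulty_bits)
    return int.from_bytes(pow_digest(header, nonce), "big") < target

header = b"block 42 | prev=abc123 | txs=..."
nonce = mine(header, 16)          # about 65,000 attempts on average
print(check(header, nonce, 16))   # True
```

Each extra bit of difficulty doubles the expected work for the miner, while the cost of checking a proposed nonce stays constant.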
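The importance of the 50% threshold can be illustrated with the simplified catch-up estimate from the Bitcoin whitepaper: if an attacker controls a fraction q of the hash power and is z blocks behind, the chance of ever catching up is (q/p)^z when q < p, where p = 1 - q, and 1 otherwise. The function name below is hypothetical, and this is the basic gambler's-ruin term only, not the whitepaper's full calculation.

```python
def catch_up_probability(q: float, z: int) -> float:
    """Chance that an attacker with hash-power share q ever erases a z-block deficit."""
    p = 1.0 - q
    if q >= p:
        return 1.0  # with a majority, catching up is only a matter of time
    return (q / p) ** z

for q in (0.10, 0.30, 0.45, 0.51):
    print(q, catch_up_probability(q, 6))
```

Below half of the hash power, the probability shrinks exponentially with every additional block of confirmation; at or above half it is certain, which is where the majority threshold comes from.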

Smart Contracts: Automating Trustless Agreements

Bitcoin was a proof-of-concept for decentralized currency, and its scripting language was intentionally limited to avoid potential security vulnerabilities. The idea of smart contracts, self-executing agreements with clauses written directly into code, was introduced in 1994, but it didn't become practical until Ethereum launched in 2015.

Smart contracts turn traditional agreements into code. Once triggering conditions are met, smart contracts execute automatically without human intervention. This automation reduces the need for intermediaries, decreases processing costs and enables more complex financial instruments that were previously impractical.

Ethereum, proposed by Vitalik Buterin, brought smart contracts into the blockchain mainstream by providing a Turing-complete programming environment. Developers write smart contracts in a high-level language like Solidity, which is compiled to bytecode that the Ethereum Virtual Machine (EVM) executes. Where blockchains had previously handled mostly simple transfers, this opened the door to decentralized applications (dApps) running the gamut from DeFi to supply chain.

Several engineering concerns had to be addressed in the implementation of smart contracts. First, code must execute consistently across all nodes: for the same input, it should always generate the same output. Second, the system needs a mechanism to prevent infinite loops and denial-of-service attacks; Ethereum does this by charging gas for every step of execution. Third, smart contracts need to be connected to external data through oracles, which are systems that bring real-world information into blockchain applications.

Smart contracts also brought additional security issues. Unlike normal software, a smart contract generally can’t be patched once it has been deployed, so there is a lot of emphasis on testing and formal verification. When a bug does slip through, the consequences can be severe, as in the infamous 2016 DAO hack, which resulted in a contentious hard fork of the Ethereum network.
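The first two requirements, deterministic execution and protection against infinite loops, can be sketched with a toy stack machine that charges for every instruction. The opcodes and the flat cost of one unit per step are assumptions for illustration and do not correspond to the EVM's real instruction set or pricing.

```python
class OutOfGas(Exception):
    """Raised when a program exhausts its execution budget."""

def run(program, gas_limit):
    """Execute a list of (opcode, *args) tuples; return (stack, gas_used)."""
    stack, pc, gas = [], 0, gas_limit
    while pc < len(program):
        if gas == 0:
            raise OutOfGas(f"halted at instruction {pc}")
        gas -= 1  # every step costs one unit, so no program can run forever
        op, *args = program[pc]
        if op == "PUSH":
            stack.append(args[0])
        elif op == "ADD":
            b, a = stack.pop(), stack.pop()
            stack.append(a + b)
        elif op == "JUMP":
            pc = args[0]
            continue
        else:
            raise ValueError(f"unknown opcode {op!r}")
        pc += 1
    return stack, gas_limit - gas

print(run([("PUSH", 2), ("PUSH", 3), ("ADD",)], gas_limit=10))  # ([5], 3)

try:
    run([("JUMP", 0)], gas_limit=1000)  # an infinite loop
except OutOfGas as err:
    print("stopped:", err)
```

Because the machine has no access to clocks, randomness or the network, every node that runs the same program with the same budget reaches the same result, and a looping program simply runs out of budget.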

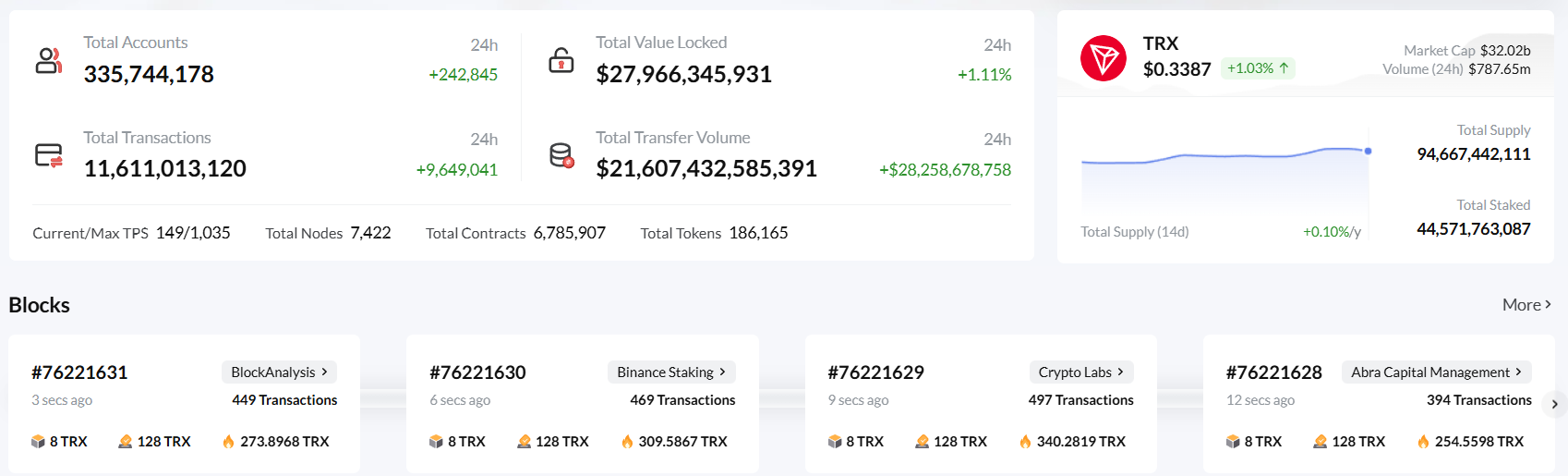

TRON emerged from these developments as a later generation of blockchain infrastructure. Founded in 2017 by Justin Sun, TRON aims to address the scalability problems faced by earlier blockchains without compromising security and decentralization.

TRON uses several design choices to make the network more efficient and user-friendly. It runs the Delegated Proof of Stake (DPoS) consensus algorithm, in which token holders vote for the witnesses that produce blocks and secure the network. Compared with PoW schemes, this approach consumes far less electricity and bases security on economic incentives instead.

One of the features that TRON is best known for is how it handles resources, which are split between Energy and Bandwidth. Energy is consumed by smart contract execution, and Bandwidth by ordinary transactions. This separation lets users tailor their costs to how they use the network, which makes it suitable for many kinds of application.

What is TRON's Energy model? TRON allows users to freeze their TRX tokens in exchange for Energy, which they can then spend on smart contract transactions instead of paying fees. This gives both developers and consumers a predictable pricing model. Those who aren't comfortable freezing a large chunk of TRX can rent Energy on the TRON platform and use it temporarily for specific operations.

TRON's technical architecture includes several features aimed at scalability. The network is designed to process thousands of transactions per second, compared with Bitcoin's roughly seven. TRON achieves this throughput through optimized consensus methods and fast block generation.

The network also supports established smart contract languages such as Solidity, which appeals to Ethereum developers. Compatibility with Ethereum is another key feature: dApps written for Ethereum can be ported to TRON with relatively little change, which lowers migration cost and improves user efficiency.
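The voting step of DPoS can be sketched as a stake-weighted tally. The number of seats, the tie-breaking rule and the round-robin schedule below are assumptions for illustration; TRON's actual election and scheduling rules are defined by the protocol and are not reproduced here.

```python
from collections import Counter

def elect_witnesses(votes, seats):
    """Pick the `seats` candidates with the most stake-weighted votes.

    votes: iterable of (stake, candidate) pairs. Ties break alphabetically.
    """
    tally = Counter()
    for stake, candidate in votes:
        tally[candidate] += stake
    ranked = sorted(tally.items(), key=lambda item: (-item[1], item[0]))
    return [candidate for candidate, _ in ranked[:seats]]

def producer_for_slot(witnesses, slot):
    """Assumed round-robin schedule: witnesses take turns producing blocks."""
    return witnesses[slot % len(witnesses)]

votes = [(100, "alpha"), (50, "beta"), (70, "gamma"), (60, "beta")]
witnesses = elect_witnesses(votes, seats=2)
print(witnesses)                        # ['beta', 'alpha']
print(producer_for_slot(witnesses, 3))  # alpha
```

Because block producers are known in advance, there is no hashing race to win, which is where the savings over proof-of-work come from.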
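A simplified model of how a contract call might be settled illustrates the idea: Energy obtained from frozen TRX is used first, and any shortfall is paid by burning TRX. The unit price and the call size below are hypothetical placeholders, since actual prices are network parameters that change over time; the only fixed fact used is that 1 TRX equals 1,000,000 sun.

```python
SUN_PER_TRX = 1_000_000  # 1 TRX = 1,000,000 sun

def settle_energy(energy_needed: int, energy_available: int, sun_per_energy: int):
    """Return (energy_taken_from_stake, sun_burned_for_shortfall)."""
    from_stake = min(energy_needed, max(energy_available, 0))
    shortfall = energy_needed - from_stake
    return from_stake, shortfall * sun_per_energy

# Hypothetical numbers: a call needing 65,000 Energy, 50,000 available,
# and an assumed price of 100 sun per unit of Energy.
used, burned = settle_energy(65_000, 50_000, sun_per_energy=100)
print(used)                  # 50000
print(burned / SUN_PER_TRX)  # 1.5 (TRX burned for the missing 15,000 Energy)
```

A user with enough Energy on hand pays nothing for the call, which is what makes costs predictable for applications that send many contract transactions.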
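The figure of roughly seven transactions per second for Bitcoin follows from back-of-the-envelope arithmetic. The sketch assumes a 1 MB block, a 10-minute block interval and an average transaction size of 250 bytes; the average size in particular is an assumption and varies in practice.

```python
def max_tps(block_bytes: int, avg_tx_bytes: int, block_interval_s: int) -> float:
    """Upper bound on transactions per second for a fixed block size and interval."""
    return block_bytes / avg_tx_bytes / block_interval_s

print(round(max_tps(1_000_000, 250, 600), 2))  # 6.67
```

Throughput under this model can only rise by making blocks larger, transactions smaller, or blocks more frequent, which is why faster block generation matters for scalability.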

Energy Leasing Services: Optimizing TRON Resource Allocation

TRON's Energy leasing mechanism is a form of resource sharing that benefits both Energy suppliers and consumers. By freezing TRX, users obtain Energy that they can either use themselves or lease to developers who lack resources of their own.

Renting Energy on TRON has advantages over conventional fee-based approaches. It lets users obtain just the right amount of Energy for specific tasks, with no long-term exposure to holding tokens.

Energy leasing also makes dApps running on TRON more cost-effective. Renting Energy, as opposed to burning TRX, can save companies a significant amount. This has made TRON economically attractive for use cases including cryptocurrency exchanges, payment networks and DeFi products.
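The cost argument can be made concrete with a small comparison. Every price in this sketch is a hypothetical placeholder chosen to show the calculation, not a quote from the network or from any rental provider.

```python
SUN_PER_TRX = 1_000_000  # 1 TRX = 1,000,000 sun

def compare_costs(energy: int, burn_sun_per_energy: int, rent_sun_per_energy: int):
    """Return (burn_cost_trx, rent_cost_trx, savings_percent) for one workload."""
    burn_sun = energy * burn_sun_per_energy
    rent_sun = energy * rent_sun_per_energy
    savings = 100 * (burn_sun - rent_sun) / burn_sun
    return burn_sun / SUN_PER_TRX, rent_sun / SUN_PER_TRX, savings

# Hypothetical prices: burning at 100 sun per Energy, renting at 40 sun per Energy.
burn_trx, rent_trx, savings = compare_costs(65_000, 100, 40)
print(burn_trx, rent_trx, savings)  # 6.5 2.6 60.0
```

For an application sending thousands of contract calls a day, a per-call difference of this kind compounds quickly, so the rental price relative to the burn price is the number worth tracking.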

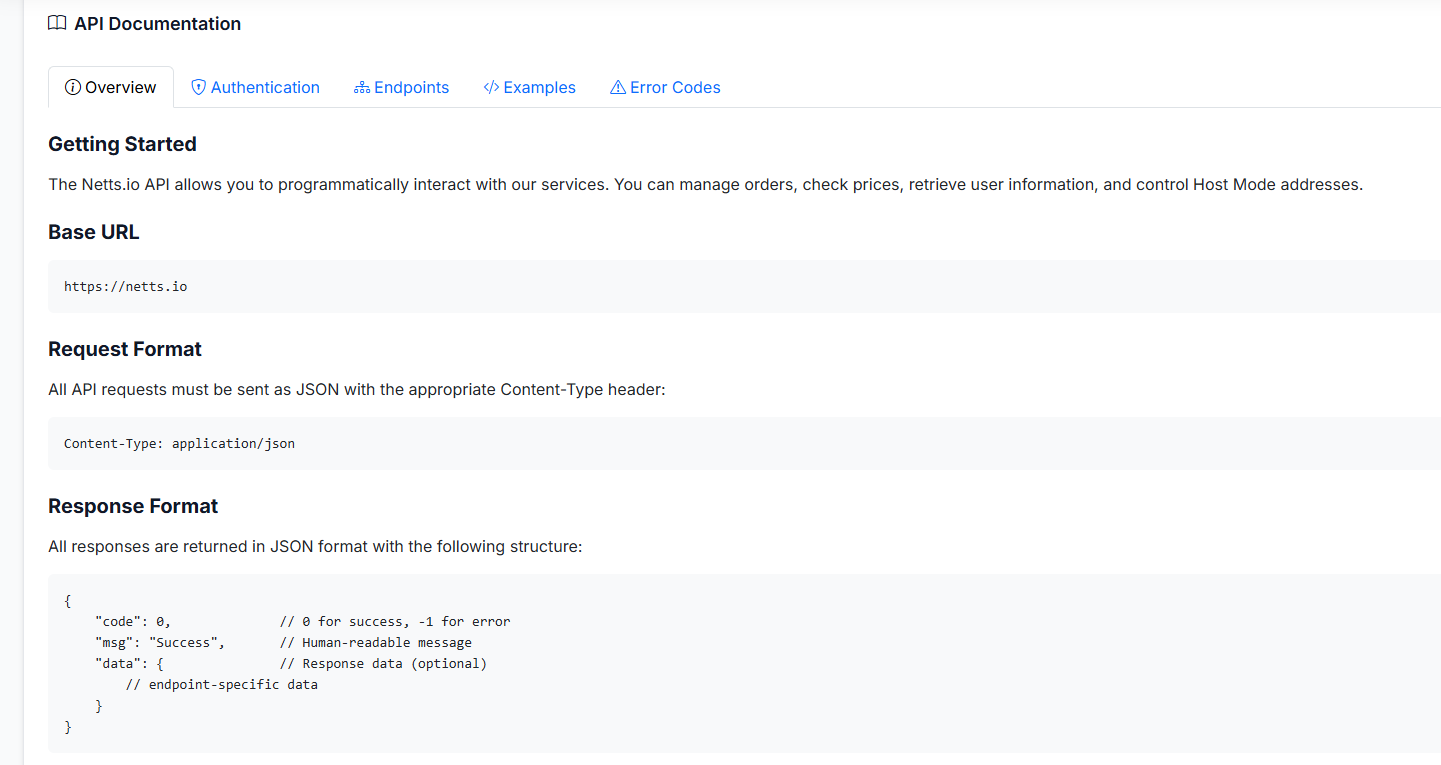

Netts API v2: Simplifying Integration

To make Energy rentals easier to integrate into software, platforms like Netts have emerged, offering APIs that handle much of the complexity for developers. The Netts API v2 gives developers and projects tools to manage TRON Energy resources more effectively.

The API gives applications up-to-the-minute Energy prices, so they can decide when and how much Energy to rent. This pricing data also helps lower costs by identifying the cheapest way to obtain Energy. Automated pricing provides three key benefits: it tracks market prices to prevent overpaying, optimizes resource allocation, and provides predictive insights.

The Netts API v2 can be deployed as an enterprise-grade solution across multiple applications using TRON. Exchanges can use automatic Energy management to reduce the cost of USDT transfers. DeFi applications can make their smart contract interactions more efficient by budgeting Energy between calls. Payment processors can use Energy leasing to reduce transaction costs. Automated Energy control is particularly beneficial for trading bots performing high-frequency intraday derivatives trading, which depend on stable operation.
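Once an application has price data in hand, the decision logic itself is simple. The sketch below deliberately does not call the Netts API: the `Quote` structure, its field names and the sample values are hypothetical stand-ins for whatever a pricing response actually contains.

```python
from dataclasses import dataclass
from typing import Iterable, Optional

@dataclass(frozen=True)
class Quote:
    """Hypothetical rental offer; not the format of any real API response."""
    source: str
    sun_per_energy: int
    min_energy: int

def cheapest_quote(quotes: Iterable[Quote], energy_needed: int) -> Optional[Quote]:
    """Pick the lowest-priced offer whose minimum order size we can meet."""
    eligible = [q for q in quotes if q.min_energy <= energy_needed]
    return min(eligible, key=lambda q: q.sun_per_energy, default=None)

quotes = [
    Quote("one-hour rental", sun_per_energy=45, min_energy=32_000),
    Quote("one-day rental", sun_per_energy=38, min_energy=100_000),
    Quote("burn TRX", sun_per_energy=100, min_energy=0),
]
best = cheapest_quote(quotes, energy_needed=65_000)
print(best.source)  # one-hour rental
```

The cheapest offer overall (the one-day rental) is skipped because the order is too small for it, which is the kind of case where comparing quotes automatically avoids overpaying.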

Conclusion: Convergence of Technologies

Cryptocurrencies are the result of decades of developments across different IT fields coming together. From the cryptographic groundwork of the 1970s and 1980s to the peer-to-peer networks of the late 1990s, each technology represented progress toward what became blockchain. Consensus algorithms were the response to the Byzantine Generals Problem, providing a way to reach reliable agreement within distributed systems. Smart contracts automated agreements between multiple parties, extending blockchains beyond simple transactions.

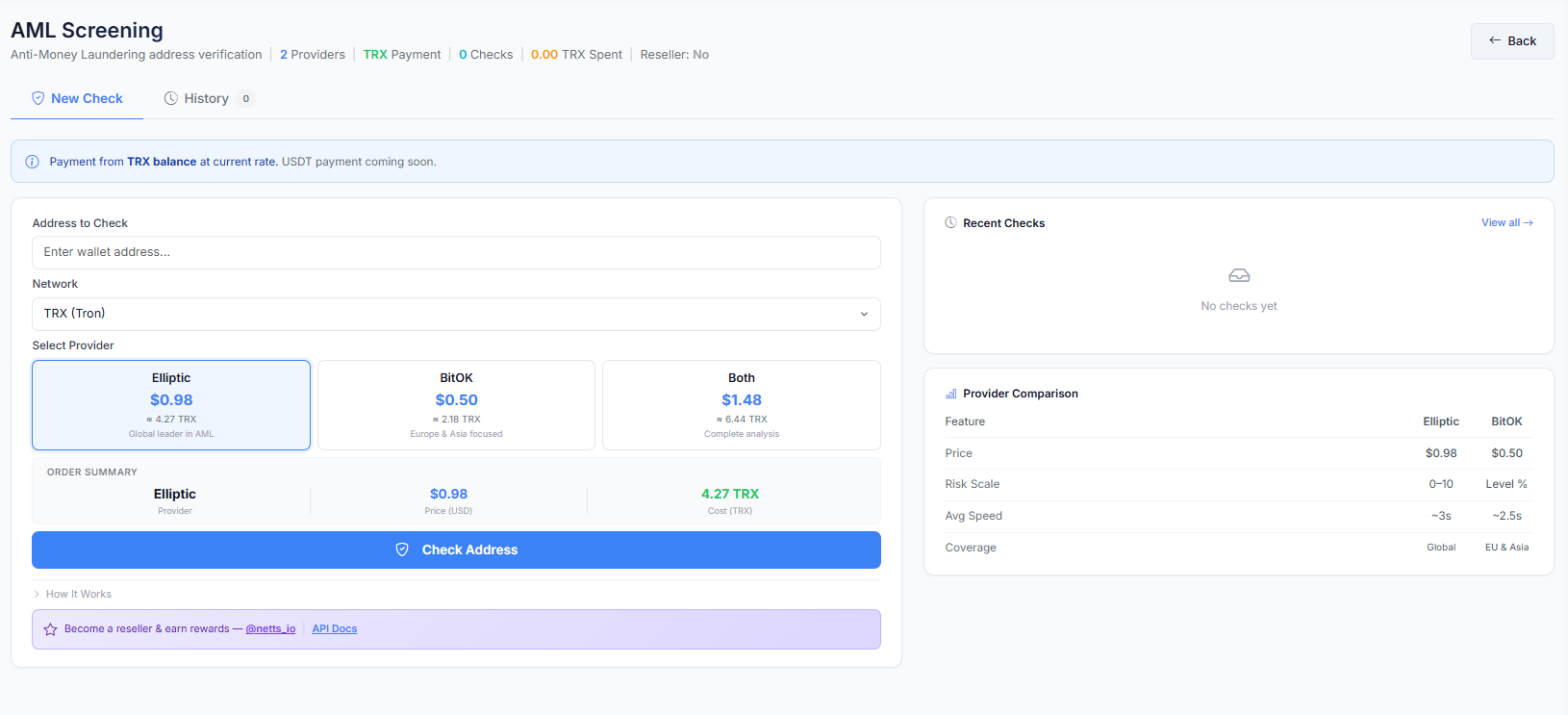

TRON builds on these foundations while implementing new ideas like the Energy rental model, which increases both efficiency and network accessibility. The Netts API v2 demonstrates how tooling can simplify this process, allowing developers and businesses to use capabilities like automatic delegation and real-time performance monitoring without requiring deep blockchain expertise. AML checks are also integrated into the service.

As blockchain technology evolves, new generations will build upon the foundational technologies that made cryptocurrency possible. The cryptoeconomic, decentralized and automated design that took decades of study to develop has laid a solid foundation for the next wave of digital finance. The technology that enabled cryptocurrency continues to evolve, and the blockchain revolution has only just begun.