Blockchain Optimization: The Main Bottlenecks

Why blockchains stall: the scalability trilemma, mempools, gas, consensus costs, and the engineering trade-offs behind every “we’ll fix it later.”

To the average user, a crypto blockchain may look like a seamless, perfect machine. Yet its flaws show up as soon as you actually use it: transactions get stuck, servers fall over, a bug surfaces. What is behind all of this? To the uninitiated, the blockchain is a mystical digital ledger, an ephemeral creature that lives everywhere and nowhere, processing transfers of value instantly and with nary an error.

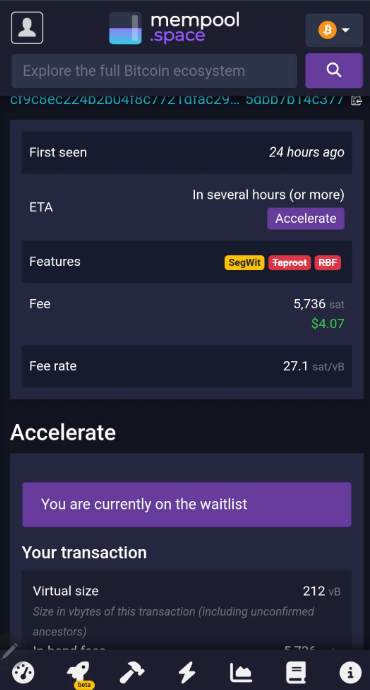

But the truth is much more earthbound. When a transaction sits in the mempool for hours and its sender misses an important DeFi liquidation, or when congestion during a popular NFT mint pushes gas fees above the value of the trade itself, these are not simple software bugs. They are side effects of bottlenecks inherent to every decentralized network.

These problems are not the result of lazy programming or a lack of effort. On the contrary, some of the world’s smartest computer scientists and cryptographers are working around the clock to solve them. But they have run up against the hard limits of physics, hardware capacity and the unavoidable trade-offs of distributed systems. To understand why your transaction is stuck, or why the network is slow, we need to look under the hood at the gears and levers that make this machine run, including the friction that slows it down.

The Scalability Trilemma and Limits of Throughput

The scalability trilemma is probably the best-known bottleneck in blockchain. As popularized by Vitalik Buterin, it states that a blockchain can fully achieve at most two of three properties: decentralization, security and scalability. You can’t max out all three. This is not a rule encoded in any algorithm; it is a natural constraint of how distributed networks operate. If you want a network that is very fast (scalable) and secure, you usually have to reduce decentralization by having fewer nodes verify transactions. Conversely, if you want a network that is both decentralized and secure, as Bitcoin and Ethereum aim to be, you give up speed. That is why Bitcoin handles around 7 transactions per second (TPS) and Ethereum about 15 to 30 TPS at the base layer, while Visa can handle tens of thousands.
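The throughput figures above follow from simple arithmetic: how many average-sized transactions fit in a block, divided by the time between blocks. A minimal sketch, where the block capacities, average transaction costs and block times are illustrative assumptions (real values vary and change over time), and `estimate_tps` is a hypothetical helper:

```python
def estimate_tps(block_capacity: float, avg_tx_cost: float, block_time_s: float) -> float:
    """Rough throughput ceiling: transactions per block divided by block time."""
    txs_per_block = block_capacity / avg_tx_cost
    return txs_per_block / block_time_s

# Bitcoin-like assumptions: ~1,000,000 bytes of transactions per block,
# ~250 bytes per average transaction, one block every ~600 seconds.
btc_tps = estimate_tps(1_000_000, 250, 600)

# Ethereum-like assumptions (illustrative only): a 15,000,000 gas target per
# block, ~50,000 gas for an "average" transaction, one block every 12 seconds.
eth_tps = estimate_tps(15_000_000, 50_000, 12)

print(f"Bitcoin-like:  {btc_tps:.1f} TPS")   # Bitcoin-like:  6.7 TPS
print(f"Ethereum-like: {eth_tps:.1f} TPS")   # Ethereum-like: 25.0 TPS
```

The formula makes the two obvious levers visible: raise the capacity per block or shorten the block time. The next paragraph explains why neither is free.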

Here, the bottleneck is block size and block time. The obvious way to increase throughput would be to make blocks larger so they hold more transactions, or to shorten the block time so blocks are produced faster. But here we hit a physical limitation: propagation delay. When a miner or validator produces a new block, that block has to reach every other node in the network, traveling through the world’s internet cables, fiber optics and routers. That journey is bounded by the speed of light and by the bandwidth of the infrastructure. The heavier a block, the longer it takes to download and verify. If blocks are produced too quickly, different miners may find competing blocks before the previous one has fully propagated, which leads to temporary “forks” and “orphan blocks” (called “uncles” in Ethereum’s proof-of-work era).
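To see why bigger or faster blocks raise the orphan rate, here is a rough model. It assumes blocks are found as a Poisson process (as in proof of work) and collapses multi-hop gossip into a single latency-plus-transfer delay; `propagation_delay_s` and `orphan_probability` are hypothetical helper names, and the bandwidth and latency figures are made up for illustration.

```python
import math

def propagation_delay_s(block_bytes: int, bandwidth_bytes_per_s: float, latency_s: float) -> float:
    """Simplified one-hop model: fixed network latency plus transfer time."""
    return latency_s + block_bytes / bandwidth_bytes_per_s

def orphan_probability(delay_s: float, block_time_s: float) -> float:
    """Chance that a competing block is found while ours is still propagating,
    assuming block discovery is a Poisson process with mean interval block_time_s."""
    return 1.0 - math.exp(-delay_s / block_time_s)

BANDWIDTH = 1_250_000  # bytes/s (about 10 Mbit/s), an illustrative assumption
LATENCY = 0.5          # seconds, an illustrative assumption

for block_bytes in (1_000_000, 8_000_000, 32_000_000):
    delay = propagation_delay_s(block_bytes, BANDWIDTH, LATENCY)
    for block_time in (600, 12):
        p = orphan_probability(delay, block_time)
        print(f"{block_bytes / 1e6:>4.0f} MB blocks every {block_time:>3} s: "
              f"delay {delay:5.1f} s, orphan risk {p:6.2%}")
```

Both levers push in the same direction: a larger block lengthens the delay, and a shorter block time shrinks the window, so either one raises the share of wasted blocks.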

A high uncle rate means the network wastes computing power on blocks that never enter the main chain, which weakens security because honest hash power is split across competing branches. A TPS limit is therefore, in effect, a network synchronization limit. In addition, every block has a “gas limit” or “block weight” limit. This does more than bound throughput; it also protects against Denial of Service (DoS) attacks. Without a cap on how much computation a block can contain, an attacker could spam the network with computationally expensive transactions that take forever to process and freeze the whole system.
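How a gas limit bounds a block can be sketched with a greedy packer: the highest-paying transactions go in first, and anything that would exceed the limit stays in the mempool. This is a simplification under stated assumptions (gas usage known in advance, no nonce ordering or base fee), not any client’s actual block-building algorithm; `Tx` and `pack_block` are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Tx:
    tx_id: str
    gas: int          # gas the transaction will consume (assumed known in advance)
    fee_per_gas: int  # what the sender offers per unit of gas

def pack_block(mempool: list[Tx], gas_limit: int) -> list[Tx]:
    """Greedy packing: best-paying transactions first, skipping any that would
    push the block past its gas limit. Skipped transactions stay pending."""
    block: list[Tx] = []
    used = 0
    for tx in sorted(mempool, key=lambda t: t.fee_per_gas, reverse=True):
        if used + tx.gas <= gas_limit:
            block.append(tx)
            used += tx.gas
    return block

mempool = [
    Tx("transfer", 21_000, 30),
    Tx("swap", 150_000, 25),
    Tx("spam", 10_000_000, 50),  # pays the most, but alone exceeds the limit
    Tx("mint", 120_000, 40),
]
block = pack_block(mempool, gas_limit=200_000)
print([tx.tx_id for tx in block])  # ['mint', 'transfer']
```

No matter what lands in the mempool, the block never exceeds its limit; the cost of that guarantee is that some transactions, like the swap here, wait for a later block.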

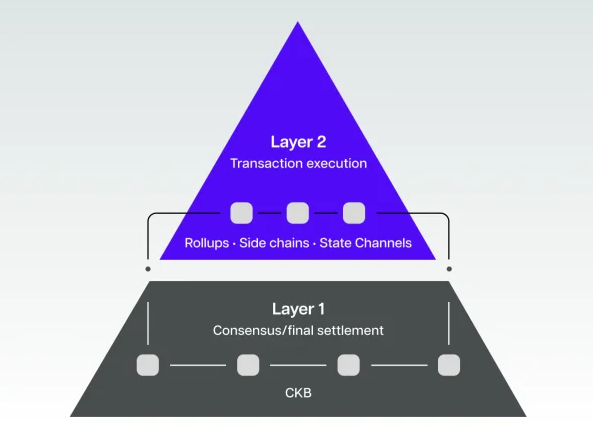

Layer 2 and the Data Availability Bottleneck

To work around the restrictions of Layer 1, the industry has invented Layer 2 solutions, “rollups” in particular. These protocols execute transactions off-chain, bundle them into batches, and post a compressed version of the data or a cryptographic proof back to the main chain (Layer 1). It looks like a perfect idea, but it introduces a new burden: data availability (DA). Even though the computation happens off-chain, enough information to rebuild the rollup state must be published on-chain so that anyone can check it.
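The batching step can be illustrated with the standard library: serialize a batch of L2 transactions and compress it. This is a hypothetical sketch using JSON and zlib (`encode_batch` and `compress_batch` are illustrative names); real rollups use their own, more compact encodings. The point is that even the compressed bytes still have to be published somewhere for data availability.

```python
import json
import zlib

def encode_batch(txs: list[dict]) -> bytes:
    """Serialize a batch compactly (no extra whitespace)."""
    return json.dumps(txs, separators=(",", ":")).encode()

def compress_batch(txs: list[dict]) -> bytes:
    """Compress the serialized batch before it is posted to L1 (simplified)."""
    return zlib.compress(encode_batch(txs), level=9)

# Hypothetical batch: 200 similar transfers among five accounts.
txs = [{"from": f"user{i % 5}", "to": f"user{(i + 1) % 5}", "amount": 10, "nonce": i}
       for i in range(200)]
raw = encode_batch(txs)
posted = compress_batch(txs)
print(f"raw {len(raw)} B, compressed {len(posted)} B, "
      f"{len(posted) / len(txs):.1f} B per transaction on L1")

# Anyone holding the posted bytes can rebuild the batch and re-execute it.
assert json.loads(zlib.decompress(posted)) == txs
```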

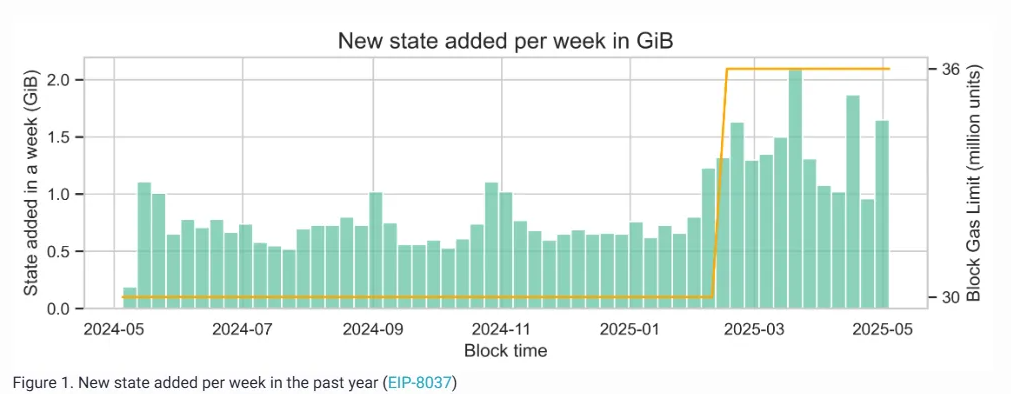

Block space on the main chain is precious and scarce; posting large amounts of calldata to Ethereum, for example, is expensive. That is why EIP-4844 (Proto-Danksharding) introduced “blobs”, a cheaper, temporary form of data storage designed specifically for rollups. But even with blobs, there is a limit to how much data the network can carry without bloating nodes. When the DA layer fills up, the cost of using those Layer 2s rises sharply, because rollups compete for the limited space to post their batches. The result is a market in which the price of a transaction on a cheap L2 depends directly on congestion on an expensive L1. It is a trade-off between the security gained by posting data to L1 and the cost of doing so.
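The way blob prices react to congestion can be sketched with the fee formula from the EIP-4844 specification: whenever blocks use more blob gas than the target, an “excess” accumulates and the blob base fee rises exponentially. The constants below are the ones originally specified in EIP-4844; later network upgrades have changed the blob target and maximum, so treat the numbers as illustrative. `next_excess_blob_gas` and `blob_base_fee` are helper names chosen here, while `fake_exponential` follows the function of the same name in the EIP.

```python
# Constants as originally specified in EIP-4844 (since revised by later upgrades).
GAS_PER_BLOB = 2**17                          # 131072
TARGET_BLOB_GAS_PER_BLOCK = 3 * GAS_PER_BLOB  # 393216
MAX_BLOB_GAS_PER_BLOCK = 6 * GAS_PER_BLOB     # 786432
MIN_BASE_FEE_PER_BLOB_GAS = 1
BLOB_BASE_FEE_UPDATE_FRACTION = 3338477

def fake_exponential(factor: int, numerator: int, denominator: int) -> int:
    """Integer approximation of factor * e**(numerator / denominator), per EIP-4844."""
    i = 1
    output = 0
    numerator_accum = factor * denominator
    while numerator_accum > 0:
        output += numerator_accum
        numerator_accum = (numerator_accum * numerator) // (denominator * i)
        i += 1
    return output // denominator

def next_excess_blob_gas(parent_excess: int, parent_blob_gas_used: int) -> int:
    """Excess grows when blocks use more blob gas than the target, shrinks otherwise."""
    return max(0, parent_excess + parent_blob_gas_used - TARGET_BLOB_GAS_PER_BLOCK)

def blob_base_fee(excess_blob_gas: int) -> int:
    return fake_exponential(MIN_BASE_FEE_PER_BLOB_GAS, excess_blob_gas,
                            BLOB_BASE_FEE_UPDATE_FRACTION)

# Simulate rollups filling every block to the maximum, 50 blocks in a row.
excess = 0
fees = []
for _ in range(50):
    excess = next_excess_blob_gas(excess, MAX_BLOB_GAS_PER_BLOCK)
    fees.append(blob_base_fee(excess))
print("blob base fee after 1, 10 and 50 full blocks:", fees[0], fees[9], fees[-1])
```

Because the excess compounds while demand stays above target, the fee climbs exponentially rather than linearly, which is exactly the “racing for limited space” effect described above.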

The Burden of History: State Expansion and Storage

One of the most important, and most often overlooked, bottlenecks is “state bloat”. A blockchain keeps a record of every transaction that has ever taken place, replicated across its nodes, and as more people use the network, this history grows. But it is not just the history; it is the “state”: the current balance of every account and the holdings of every smart contract. To verify a transaction, a node needs to know the current state. It must be able to confirm that Alice does in fact own the 10 tokens she wants to send to Bob. The larger the state grows, the more RAM and SSD storage are needed to access it quickly.
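The balance check a node performs can be sketched with the state as a plain in-memory dictionary. Real clients keep state in authenticated tries on disk, and that structure is what keeps growing; `apply_transfer` and `InsufficientBalance` are hypothetical names for this illustration.

```python
class InsufficientBalance(Exception):
    pass

def apply_transfer(state: dict[str, int], sender: str, recipient: str, amount: int) -> dict[str, int]:
    """Check a transfer against the current state and return the new state.
    The check is only possible because the node holds the sender's balance."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    balance = state.get(sender, 0)
    if balance < amount:
        raise InsufficientBalance(f"{sender} has {balance}, tried to send {amount}")
    new_state = dict(state)  # work on a copy so a rejected transfer changes nothing
    new_state[sender] = balance - amount
    new_state[recipient] = new_state.get(recipient, 0) + amount
    return new_state

state = {"alice": 10, "bob": 0}
state = apply_transfer(state, "alice", "bob", 10)
print(state)  # {'alice': 0, 'bob': 10}

try:
    apply_transfer(state, "alice", "bob", 1)
except InsufficientBalance as err:
    print("rejected:", err)
```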

If the state becomes too big to fit on consumer-grade hardware, only large data centers can afford to run nodes, which hurts decentralization. Optimizing this is incredibly difficult. You can’t simply delete old data, because the current state is the product of the chain’s entire history back to the Genesis block. Developers are experimenting with “stateless clients” and “state expiry”, in which nodes stop storing old or rarely used data and instead rely on compact cryptographic proofs of it when it is needed. But these are sophisticated cryptographic solutions that take years to deploy securely. The fight against data hoarding never ends.
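The idea behind stateless validation can be illustrated with a Merkle proof: a node keeps only a 32-byte root, and whoever submits a transaction also supplies a short proof that, say, Alice’s balance is part of the committed state. This sketch assumes a simple binary SHA-256 Merkle tree; Ethereum actually uses Merkle Patricia tries (with Verkle trees among the proposed replacements), and a production tree would also separate leaf hashing from node hashing. All function names are hypothetical.

```python
import hashlib

def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """All levels of a binary Merkle tree, from hashed leaves up to the root."""
    level = [h(leaf) for leaf in leaves]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level = level + [level[-1]]  # pair an odd last node with itself
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels

def merkle_proof(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag says whether our node is on the left."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        sibling_hash = level[sibling] if sibling < len(level) else level[index]
        proof.append((sibling_hash, index % 2 == 0))
        index //= 2
    return proof

def verify(root: bytes, leaf: bytes, proof: list[tuple[bytes, bool]]) -> bool:
    """A stateless node needs only the root, the claimed leaf and the proof."""
    node = h(leaf)
    for sibling_hash, is_left in proof:
        node = h(node + sibling_hash) if is_left else h(sibling_hash + node)
    return node == root

accounts = [b"alice:10", b"bob:5", b"carol:7", b"dave:0", b"erin:3"]
levels = build_levels(accounts)
root = levels[-1][0]
proof = merkle_proof(levels, 0)
print(verify(root, b"alice:10", proof))    # True
print(verify(root, b"alice:1000", proof))  # False
```

The proof grows with the logarithm of the number of accounts, which is why shifting storage from every node to the transaction sender is attractive.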

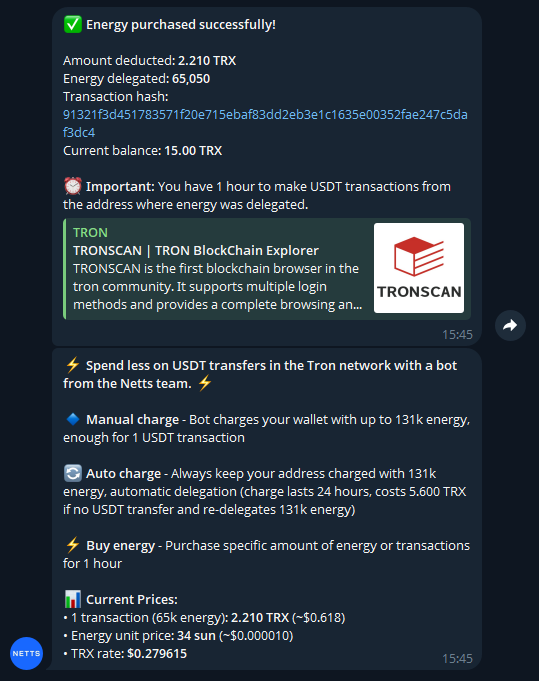

Every contract you deploy and every token you mint is weight that nodes must carry forever. This resource consumption is why some networks adopt resource models such as Energy and Bandwidth. On TRON, for example, users can pay for smart contract interactions with Energy, obtained by staking or renting it, instead of burning TRX on fees. Such models try to price the burden on the network correctly: those who consume network resources pay to support them. Without such mechanisms, the “tragedy of the commons” would produce a chain that fills up and becomes unusable.
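A resource model of this kind can be sketched as “spend the staked or rented resource first, burn the native token for any shortfall”. The `Account` type, `pay_for_execution` and every number below are hypothetical; actual TRON Energy prices and consumption are set by the network and change over time. The only TRON-specific fact used is that “sun” is the smallest unit of TRX.

```python
from dataclasses import dataclass

@dataclass
class Account:
    energy: int       # resource units available from staking or renting (hypothetical)
    balance_sun: int  # native-token balance in sun (1 TRX = 1,000,000 sun)

def pay_for_execution(acct: Account, energy_needed: int, sun_per_energy: int) -> int:
    """Spend Energy first and burn native tokens for any shortfall.
    Returns the amount burned, in sun. sun_per_energy is a hypothetical price."""
    from_energy = min(acct.energy, energy_needed)
    burn = (energy_needed - from_energy) * sun_per_energy
    if burn > acct.balance_sun:
        raise ValueError("not enough Energy or balance to pay for execution")
    acct.energy -= from_energy
    acct.balance_sun -= burn
    return burn

acct = Account(energy=100_000, balance_sun=50_000_000)
print(pay_for_execution(acct, 65_000, sun_per_energy=100))  # 0: fully covered by Energy
print(pay_for_execution(acct, 65_000, sun_per_energy=100))  # 3000000: 30,000 Energy short
print(acct)  # Account(energy=0, balance_sun=47000000)
```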

Consensus Mechanisms and Their Overhead

At the heart of every blockchain is a consensus mechanism: the way thousands of strangers collectively agree on a single truth. Whether we are talking about PoW or PoS, consensus always involves some amount of communication and computation. In PoW, the cost lies largely in the energy and hardware it takes to secure the network, and the difficulty adjustment has to keep blocks coming at a rate that is neither too fast (which hurts security) nor too slow (which hurts speed). PoS is energy efficient, so its bottleneck shows up instead as communication overhead.
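The difficulty adjustment can be sketched with a Bitcoin-style retarget rule: every 2,016 blocks, scale difficulty by how far the actual time taken deviates from the expected 600 seconds per block, limiting any single change to a factor of four. This version works on difficulty rather than on the target the Bitcoin protocol actually stores, and it omits the protocol’s integer encoding details; `retarget` is a hypothetical name.

```python
EXPECTED_BLOCK_TIME_S = 600   # Bitcoin's target spacing
RETARGET_INTERVAL = 2016      # blocks between adjustments

def retarget(old_difficulty: float, actual_timespan_s: float) -> float:
    """Scale difficulty so the next 2016 blocks take about two weeks."""
    expected = EXPECTED_BLOCK_TIME_S * RETARGET_INTERVAL
    # Limit any single adjustment to a factor of 4 in either direction.
    clamped = min(max(actual_timespan_s, expected / 4), expected * 4)
    return old_difficulty * expected / clamped

print(retarget(1000.0, 600 * 2016 / 2))  # blocks came twice as fast -> 2000.0
print(retarget(1000.0, 600 * 2016 * 2))  # twice as slow -> 500.0
print(retarget(1000.0, 1.0))             # absurdly fast, clamped -> 4000.0
```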

Validators must constantly exchange messages (votes, or attestations) about which blocks are valid. As the number of validators grows to increase decentralization, the number of messages grows much faster: if every validator talks to every other one, it grows quadratically. This “chatter” can overload the network. Making a consensus algorithm scale comfortably to thousands of validators without slowing transactions to a crawl is a gargantuan task. It relies on advanced mathematics and “signature aggregation” techniques (such as BLS signatures) that condense thousands of votes into a single compact piece of data. Moreover, in many chains, especially proof-of-work ones, the “finality” of a transaction (the assurance that it is irreversible) is probabilistic.
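The gap between naive voting and aggregation is easy to quantify. The sketch below compares all-to-all messaging with a simplified pattern in which validators send votes to a single aggregator that broadcasts one combined result, similar in spirit to linear-communication BFT protocols; it ignores gossip topology, committees and retries, and both function names are hypothetical.

```python
def all_to_all_messages(n: int) -> int:
    """Every validator sends its vote directly to every other validator."""
    return n * (n - 1)

def aggregated_messages(n: int) -> int:
    """Validators send votes to one aggregator, which broadcasts a single
    aggregated result (for example, one aggregate signature) back to all."""
    return 2 * (n - 1)

for n in (100, 1_000, 10_000):
    print(f"{n:>6,} validators: all-to-all {all_to_all_messages(n):>12,}, "
          f"aggregated {aggregated_messages(n):>7,}")
```

Going from 1,000 to 10,000 validators multiplies all-to-all traffic roughly a hundredfold but aggregated traffic only tenfold, which is why aggregation matters so much for large validator sets.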

In many chains, you have to wait a number of blocks before you can be confident a transaction will stick. This delay reflects the time the network needs to converge on consensus. It is a security feature, not a bug, but to a user waiting for confirmation it feels like a bottleneck. Furthermore, the “Nothing at Stake” problem of early PoS designs made complicated “slashing” mechanisms necessary to penalize misbehavior, adding yet more logic to process for every block. Another hidden bottleneck is “client diversity”. When everyone runs the same software client (for example, Geth), a single bug can take down the whole network. Encouraging a diverse ecosystem of clients provides resilience, but it adds a lot of development overhead.
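How many blocks is “a number of blocks”? The Bitcoin whitepaper (section 11, “Calculations”) gives the probability that an attacker with a fraction `q` of the hash power ever catches up from `z` blocks behind. A direct Python port of that calculation, with `attacker_success_probability` as a name chosen here:

```python
import math

def attacker_success_probability(q: float, z: int) -> float:
    """Probability that an attacker with hash-power share q ever overtakes
    the honest chain from z blocks behind (Bitcoin whitepaper, section 11)."""
    p = 1.0 - q
    if q >= p:
        return 1.0  # a majority attacker eventually catches up
    lam = z * (q / p)
    total = 1.0
    for k in range(z + 1):
        # Poisson probability that the attacker has mined k blocks meanwhile.
        poisson = math.exp(-lam)
        for i in range(1, k + 1):
            poisson *= lam / i
        total -= poisson * (1 - (q / p) ** (z - k))
    return total

for z in range(0, 11, 2):
    print(f"z={z:2d}  P={attacker_success_probability(0.1, z):.7f}")
```

With `q = 0.1`, the probability falls from 1.0 at zero confirmations to about 0.2 after one block and keeps shrinking roughly geometrically with each additional confirmation, which is why exchanges wait several blocks.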

The Hidden Tax: MEV and Execution Bottlenecks

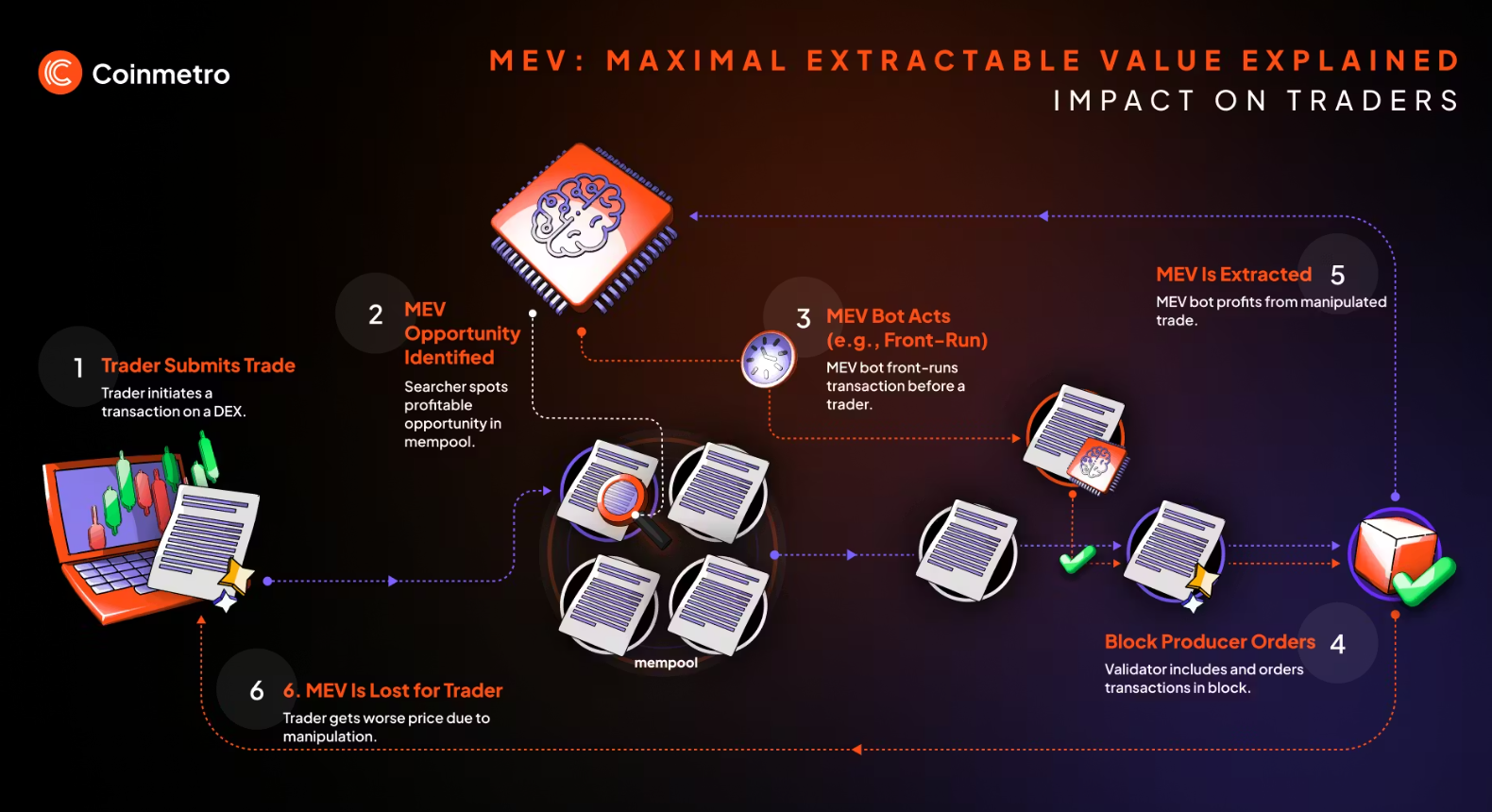

Consider first the virtual machine (VM), the execution environment for smart contracts. The Ethereum Virtual Machine (EVM) is the standard, but it was not originally designed for high-throughput parallel execution. It processes the transactions in a block one at a time, in order. If one transaction is very complex and takes a long time to compute, everyone else has to wait in line, like a grocery store with a single checkout lane. Modern blockchains are pursuing “parallel execution”, in which non-conflicting transactions (for example, Alice paying Bob and Charlie paying Dave) are processed simultaneously on different CPU cores. But that is easier said than done: identifying which transactions interfere with each other, in real time, is a challenging computer science problem.
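Conflict detection becomes tractable if every transaction declares up front which accounts it touches, as Solana transactions do (Ethereum’s access lists are optional). Under that assumption, here is a sketch that groups transactions into parallel “waves” while preserving the result of serial execution; `Transaction` and `schedule_waves` are hypothetical, and every declared account is conservatively treated as written.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Transaction:
    tx_id: str
    accounts: frozenset[str]  # every account the tx reads or writes, declared up front

def schedule_waves(txs: list[Transaction]) -> list[list[str]]:
    """Group transactions into waves that can run in parallel. Each transaction
    lands in the first wave after every earlier transaction it conflicts with,
    so running the waves in order matches executing the list serially."""
    last_wave: dict[str, int] = {}  # account -> latest wave that touched it
    waves: list[list[str]] = []
    for tx in txs:
        wave = max((last_wave[a] + 1 for a in tx.accounts if a in last_wave), default=0)
        if wave == len(waves):
            waves.append([])
        waves[wave].append(tx.tx_id)
        for a in tx.accounts:
            last_wave[a] = wave
    return waves

txs = [
    Transaction("alice->bob", frozenset({"alice", "bob"})),
    Transaction("charlie->dave", frozenset({"charlie", "dave"})),
    Transaction("bob->eve", frozenset({"bob", "eve"})),
    Transaction("frank->grace", frozenset({"frank", "grace"})),
]
print(schedule_waves(txs))
# [['alice->bob', 'charlie->dave', 'frank->grace'], ['bob->eve']]
```

The hard part in practice is the assumption itself: when access sets are not declared, the chain has to guess them or execute optimistically and re-run transactions that turn out to conflict.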

Aside from execution speed, there is the MEV (maximal extractable value) bottleneck. Because most transactions are visible in the public mempool before they are included in a block, sophisticated bots can front-run or sandwich user transactions for profit. This acts as a hidden tax on users, resulting in worse exchange rates and failed transactions, and it floods the network with spam as bots compete against each other. MEV is one of the hardest problems in blockchain optimization. Approaches such as “proposer-builder separation” (PBS) aim to contain its effects, but it remains an ongoing inefficiency that makes for a poor user experience.
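The sandwich attack can be reproduced with a constant-product (x * y = k) pool, the design used by Uniswap v2-style AMMs. A sketch with a 0.3% fee and made-up reserves and trade sizes; `swap_out` and `simulate_sandwich` are hypothetical helpers.

```python
def swap_out(reserve_in: float, reserve_out: float, amount_in: float, fee: float = 0.003) -> float:
    """Output of a constant-product swap; the fee is taken from the input."""
    amount_in_after_fee = amount_in * (1 - fee)
    return reserve_out * amount_in_after_fee / (reserve_in + amount_in_after_fee)

def simulate_sandwich(x: float, y: float, victim_in: float, attacker_in: float, fee: float = 0.003):
    """x, y: pool reserves of tokens A and B. Victim and attacker both buy B with A.
    Returns (victim output without attack, victim output with attack, attacker profit in A)."""
    victim_alone = swap_out(x, y, victim_in, fee)

    # 1. Front-run: the attacker buys B first, pushing B's price up.
    attacker_b = swap_out(x, y, attacker_in, fee)
    x, y = x + attacker_in, y - attacker_b
    # 2. The victim's trade executes at the worse price.
    victim_sandwiched = swap_out(x, y, victim_in, fee)
    x, y = x + victim_in, y - victim_sandwiched
    # 3. Back-run: the attacker sells its B back into the pool for A.
    attacker_a = swap_out(y, x, attacker_b, fee)
    return victim_alone, victim_sandwiched, attacker_a - attacker_in

alone, sandwiched, profit = simulate_sandwich(1_000.0, 1_000.0, victim_in=100.0, attacker_in=100.0)
print(f"victim gets {alone:.2f} B without the attack, {sandwiched:.2f} B with it")
print(f"attacker profit: {profit:.2f} A")
```

With equal 1,000-token reserves and 100-token trades, the victim receives noticeably less than it would against the untouched pool, and the attacker ends with more token A than it started with: the difference is the hidden tax.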

The Fragmented World: Interoperability Challenges

With the move to a multi-chain world, a new bottleneck has arisen: interoperability. We have built little islands of value. Transferring assets from Ethereum to Solana, or from Bitcoin to a Layer 2 network, requires “bridges”. These bridges are the weak points of the ecosystem, vulnerable to hacks and exploits, and “wrapping” tokens adds another layer of complexity and risk. The underlying bottleneck is the absence of a universal communication protocol. Every blockchain speaks a different language and has its own notion of time and finality.

Building a “trustless” bridge, one that does not depend on a centralized custodian, is genuinely difficult. It requires the destination chain to run a “light client” of the source chain, verifying its block headers and proofs, which is itself computation- and data-intensive. Beyond the hassle of moving liquidity across chains, this fragments the market and makes it inefficient. Users get stuck on one chain with no easy way to refill TRON Energy or move their assets to wherever the best yield is, unless they weave through a web of complicated, risky protocols. Moreover, “atomic composability”, the ability to interact with multiple protocols in a single transaction, is lost across chains. You can’t take out a flash loan on Chain A to arbitrage a price discrepancy on Chain B without considerable risk and delay.
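At its simplest, a light client checks that block headers form a chain and carry valid consensus proofs, without downloading the transactions themselves. The toy sketch below assumes SHA-256 proof of work over JSON-encoded headers; a real bridge would verify the source chain’s actual consensus rules (for a PoS chain, validator signatures) plus a Merkle inclusion proof for the specific transfer. `header_hash`, `mine` and `verify_header_chain` are hypothetical helpers.

```python
import hashlib
import json

def header_hash(header: dict) -> str:
    return hashlib.sha256(json.dumps(header, sort_keys=True).encode()).hexdigest()

def mine(prev_hash: str, tx_root: str, target: int) -> dict:
    """Find a nonce that puts the header hash below the target (toy proof of work)."""
    nonce = 0
    while True:
        header = {"prev_hash": prev_hash, "tx_root": tx_root, "nonce": nonce}
        if int(header_hash(header), 16) < target:
            return header
        nonce += 1

def verify_header_chain(headers: list[dict], trusted_hash: str, target: int) -> bool:
    """Light-client check: each header links to the previous one and carries
    valid proof of work. Transactions are never downloaded or executed."""
    prev = trusted_hash
    for header in headers:
        if header["prev_hash"] != prev:
            return False
        digest = header_hash(header)
        if int(digest, 16) >= target:
            return False
        prev = digest
    return True

TARGET = 2**248   # toy difficulty: roughly 1 in 256 hashes qualifies
GENESIS = "00" * 32

headers, prev = [], GENESIS
for tx_root in ("root-1", "root-2", "root-3"):
    header = mine(prev, tx_root, TARGET)
    headers.append(header)
    prev = header_hash(header)

print(verify_header_chain(headers, GENESIS, TARGET))   # True
tampered = [headers[0], dict(headers[1], tx_root="forged"), headers[2]]
print(verify_header_chain(tampered, GENESIS, TARGET))  # False
```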

The Human Touch: Governance and Upgradeability

Finally, the most frustrating bottleneck of all may be human: governance. Blockchains are software, and software needs updates. But in a decentralized network, who decides when and how an upgrade happens? Scheduling a “hard fork”, an upgrade that is not backward compatible, requires agreement among developers, node operators, miners or validators, and the wider community. If they disagree, the chain can split, as Ethereum did in 2016 after the DAO hack, producing two coins: Ethereum and Ethereum Classic.

This need for social agreement stands in the way not only of change but also of innovation. A centralized tech company can roll out an update to its servers in minutes. If a blockchain requires broad consensus for even the smallest change, it becomes very hard for the chain to adopt improvements quickly. Minimizing governance is often the long-term goal, but in the early days, active governance is essential for fixing bugs and improving the protocol. The tension between “move fast and break things” on one side and stability and immutability on the other is a constant source of friction. It can turn some of the most crucial optimizations, such as a block size increase or a change to the fee mechanism, into politically charged battlefields that slow progress for years.

Conclusion

The bottlenecks of blockchain (scalability, data availability, state bloat, consensus overhead, execution speed, MEV, interoperability and governance) are not arbitrary boundaries. They are the byproduct of an ambitious aspiration: to build a trustless, decentralized and secure worldwide computer. Every optimization is a trade-off. Increasing block size hurts decentralization. Sharding makes security much harder to guarantee. Parallel execution risks state inconsistency. That makes it all the more remarkable that, by 2026, Layer 2 rollups, data availability sampling and zero-knowledge proofs (SNARKs among them) have gone from ideas to working reality, after a few years of people thinking outside the box.

Thanks to human ingenuity, we continue to rise, slowly but surely, above these physical and logical limitations. But users should understand that the “friction” they feel is the price of a system based on the laws of physics and strict consensus rather than trust in a central authority. Just as you may need TRON Energy to cover transaction costs on TRON, the industry needs a continuous supply of innovation to scale these systems for the entire world.

For people dealing with the intricacies of obtaining resources on TRON, one option is a tool such as the Netts Energy Charge Bot (Charge_bot on Telegram), which rents Energy to users so that USDT transfers cost less in fees. Users choose a wallet and deposit TRX into it; the bot then uses that TRX to top up Energy for one hour, so they don’t have to burn large amounts of TRX on each transfer. The process is simple: pick a wallet, charge it, and make your transfer, with the bot also able to estimate the resources spent. It is a small but valuable tool for improving UX in an environment where resources count.